If you were anywhere near the internet yesterday (let’s be honest, you were), you probably noticed major outages across Reddit, Figma, Roblox, and even parts of Amazon itself.

The root cause was simple: DNS resolution errors in AWS’s us-east-1 region.

What does DNS do inside AWS?

At a high level, DNS (Domain Name System) is the internet’s address book.

It translates names like dynamodb.us-east-1.amazonaws.com into actual IPs.

Without it, computers and services simply don’t know where to send requests.

Inside AWS, that means:

EC2 instances resolving internal API endpoints

Lambda functions reaching DynamoDB tables or SQS queues

Control-plane systems talking to each other through internal service endpoints

When DNS resolution breaks, everything that depends on those lookups stops.
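To make the lookup step concrete, here is a minimal Python sketch built on the standard library's `socket.getaddrinfo`. The helper name `resolve` is my own, and returning an empty list on failure is just one way a caller might handle it. It says nothing about how AWS's internal resolvers are built.

```python
import socket


def resolve(hostname: str) -> list[str]:
    """Return the sorted, de-duplicated IP addresses for hostname.

    Returns an empty list when the name cannot be resolved.
    """
    try:
        # Restricting to SOCK_STREAM avoids one duplicate entry per socket type.
        infos = socket.getaddrinfo(hostname, None, type=socket.SOCK_STREAM)
    except socket.gaierror:
        # Resolution failed: the caller has no address to send requests to.
        return []
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP.
    return sorted({info[4][0] for info in infos})
```

On a healthy day, `resolve("dynamodb.us-east-1.amazonaws.com")` should return a handful of addresses. When resolution fails, the same call comes back empty and every request that needed that address has nowhere to go.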

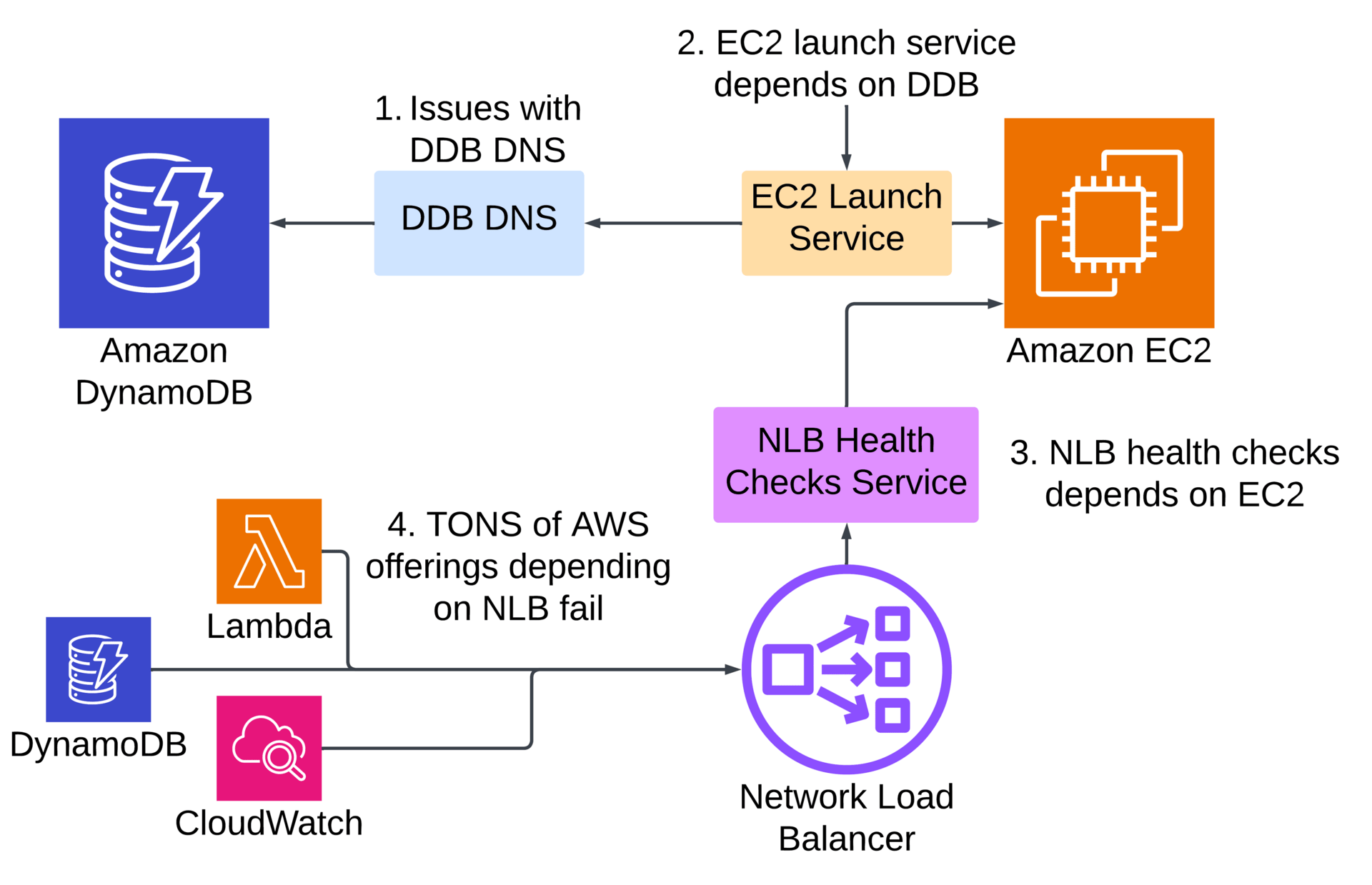

The internal dependency chain

According to AWS’s own incident report, the initial DNS failure caused DynamoDB service endpoints in us-east-1 to become unreachable.

That single failure cascaded:

EC2’s internal launch subsystem (which stores state in DynamoDB) failed.

That caused new EC2 instance launches to hang, which in turn hit services that depend on EC2 (ECS, Glue, RDS).

Then Network Load Balancer (NLB) health checks began failing due to dependencies on those same internal EC2 components.

Those NLB failures triggered network connectivity issues across Lambda, CloudWatch, and DynamoDB itself.
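The chain above can be sketched as a small graph walk. This is my simplified reading of the cascade, not AWS's real internal dependency graph: the node names and the `blast_radius` helper are hypothetical, and the edges only encode what the steps above describe.

```python
from collections import deque

# Edges read "X failing breaks Y". A simplified model of the chain described
# above, not AWS's actual internal dependency graph.
BREAKS = {
    "dns": ["dynamodb-endpoints"],
    "dynamodb-endpoints": ["ec2-launch-subsystem"],
    "ec2-launch-subsystem": ["ec2-launches", "nlb-health-checks"],
    "ec2-launches": ["ecs", "glue", "rds"],
    "nlb-health-checks": ["lambda", "cloudwatch", "dynamodb-connectivity"],
}


def blast_radius(root: str, breaks: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk: everything impacted when `root` fails, in discovery order."""
    seen = {root}
    order = []
    queue = deque([root])
    while queue:
        current = queue.popleft()
        for dependent in breaks.get(current, []):
            if dependent not in seen:
                seen.add(dependent)
                order.append(dependent)
                queue.append(dependent)
    return order
```

Calling `blast_radius("dns", BREAKS)` walks from the DNS failure out to every downstream service in the model, while `blast_radius("rds", BREAKS)` returns nothing because nothing in this toy graph depends on RDS. The point is how far one root node reaches.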

By early morning, AWS began throttling new instance launches, Lambda event polling, and SQS processing to stabilize recovery, which is a common strategy when trying to bring failing services back online. It wasn’t until mid-afternoon that all services were back to normal.
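AWS hasn't said how its throttles are implemented, so treat the following as a generic illustration rather than their method. A token bucket is one common way to cap how fast new work is admitted while a system recovers. The class name, parameters, and injectable `clock` are all my own assumptions.

```python
import time


class TokenBucket:
    """Admit at most `rate` operations per second, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock            # injectable so the behavior can be tested
        self.tokens = capacity        # start with a full bucket
        self.updated = clock()

    def allow(self) -> bool:
        """Return True if one more operation may proceed right now."""
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

Lowering `rate` during recovery keeps a backlog of launches or queued events from stampeding a service that has only just come back, at the cost of a slower return to normal.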

The control plane vs. data plane problem

In AWS, the control plane manages and configures resources (like launching or updating instances), while the data plane runs the live workloads that actually serve traffic. While the data plane (your running EC2 instances or containers) mostly stayed up, the control plane was crippled. You couldn’t:

Launch new instances

Scale out workloads

Trigger new Lambda executions reliably

That’s why users saw existing services still responding, but new deployments or autoscaling events failing. This distinction shows up in almost every large AWS outage: the running infrastructure survives, but anything that needs coordination fails.
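Here is a toy model of that split. The `Fleet` class and its methods are hypothetical and have nothing to do with any real AWS API. The only thing it shows is that serving traffic and changing capacity are separate code paths that can fail independently.

```python
class ControlPlaneUnavailable(Exception):
    """Raised when a management operation cannot be coordinated."""


class Fleet:
    def __init__(self, running: int):
        self.running = running              # data plane: instances already serving
        self.control_plane_healthy = True   # flipped to False to simulate the outage

    def handle_request(self) -> str:
        # Data-plane path: needs only an instance that is already running.
        if self.running == 0:
            raise RuntimeError("no running instances")
        return "200 OK"

    def scale_out(self, count: int) -> None:
        # Control-plane path: needs the management layer to coordinate a launch.
        if not self.control_plane_healthy:
            raise ControlPlaneUnavailable("cannot launch new instances")
        self.running += count
```

With `control_plane_healthy` set to `False`, `handle_request()` keeps answering from the instances you already have, while `scale_out()` raises. That is roughly what teams experienced: traffic was served, but capacity was frozen at whatever was running when things broke.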

Why the outage felt global

Even though it started in us-east-1, AWS noted that services relying on that region for global control operations (like IAM and DynamoDB Global Tables) were affected.

That’s because us-east-1 acts as a root control plane for several global services. When it fails, the ripple hits everywhere, even workloads technically hosted in other regions.

It was a reminder of how much of the cloud relies on shared control-plane dependencies, and how a small failure can cascade when those dependencies aren’t fully isolated.

AWS has promised a full post-event summary, but here is my takeaway:

When the internal systems that manage the cloud depend on the same services they provide to customers, resilience gets recursive, and risk compounds.

See you next week.

- Arjay